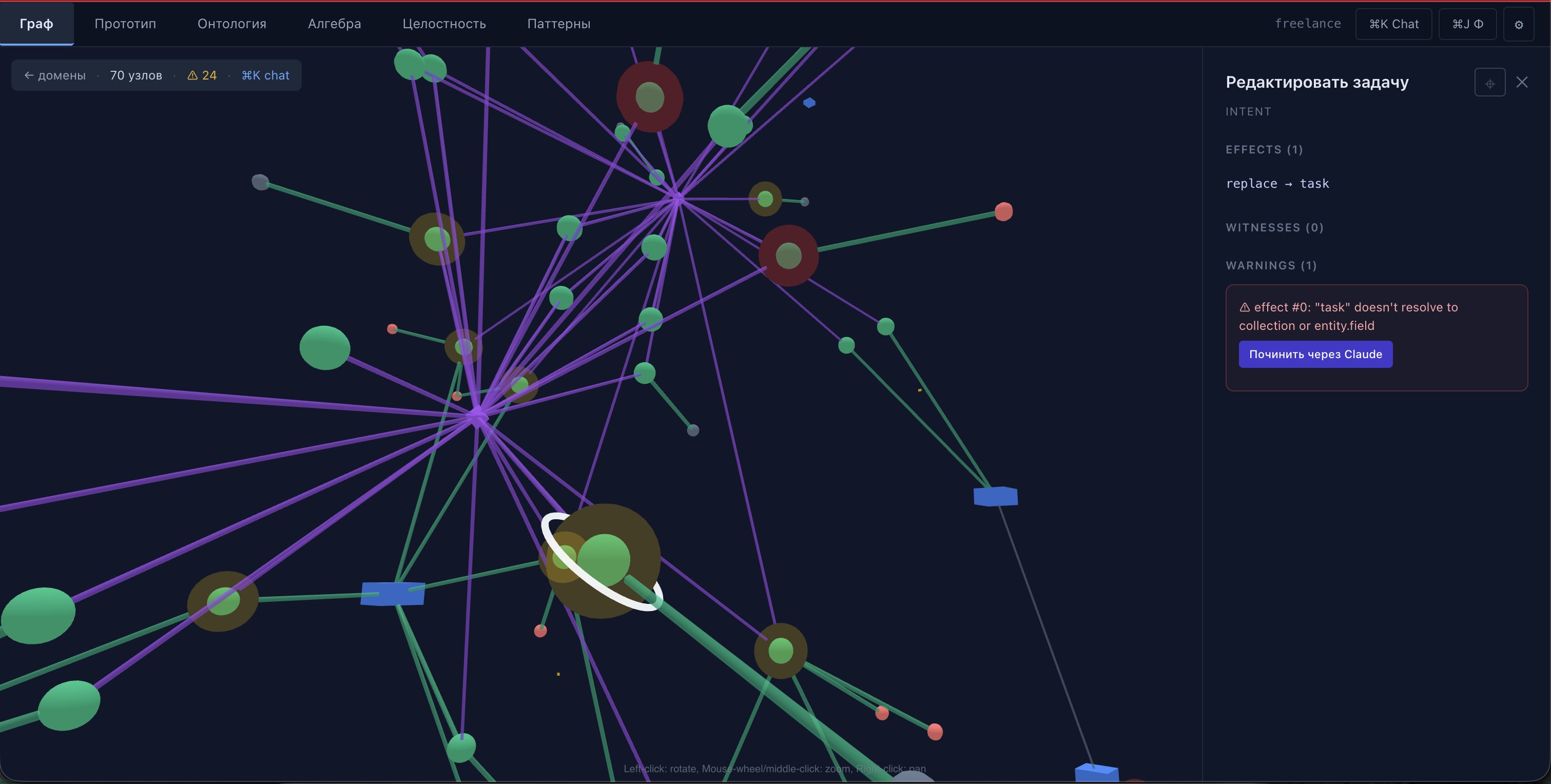

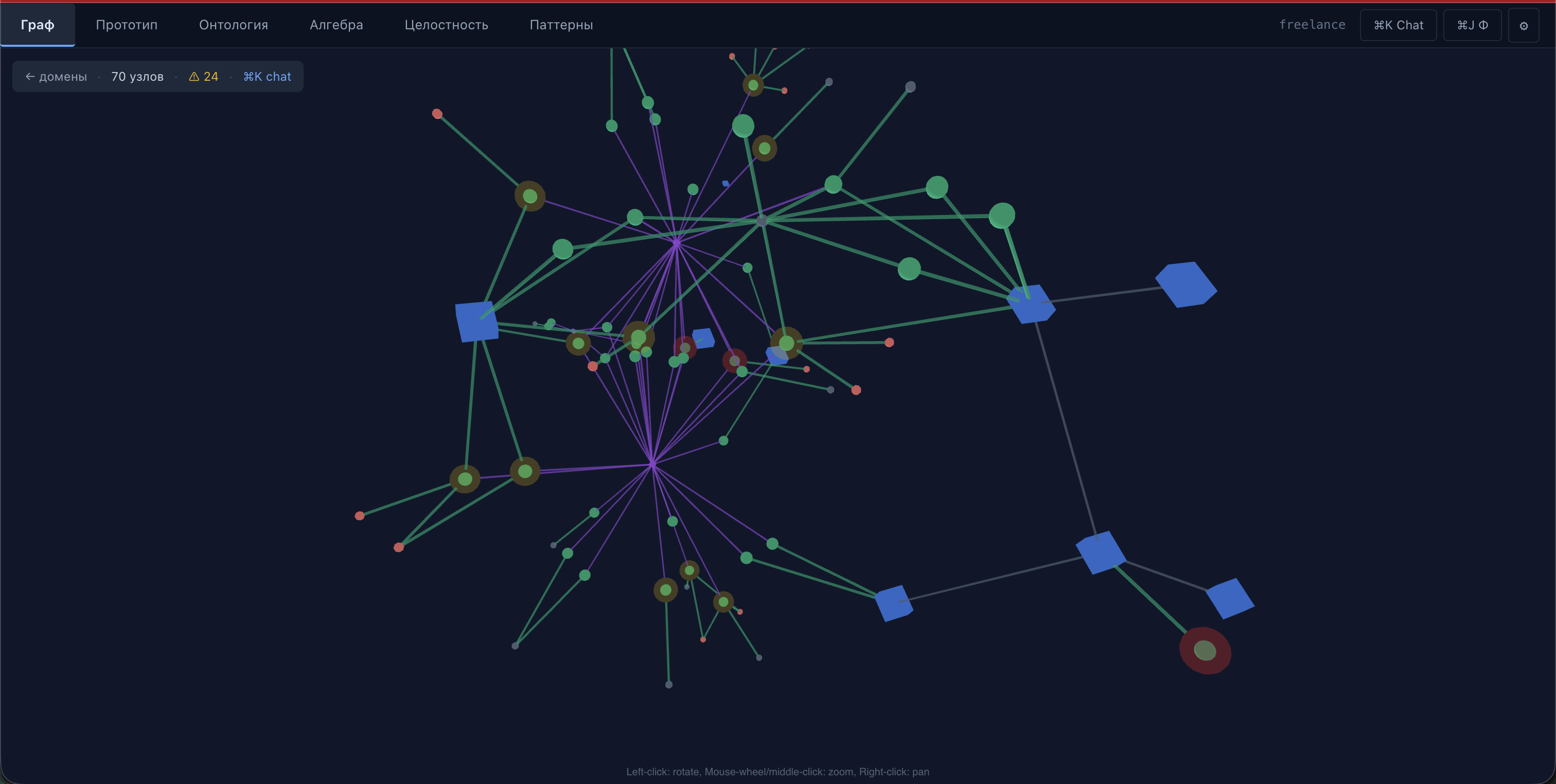

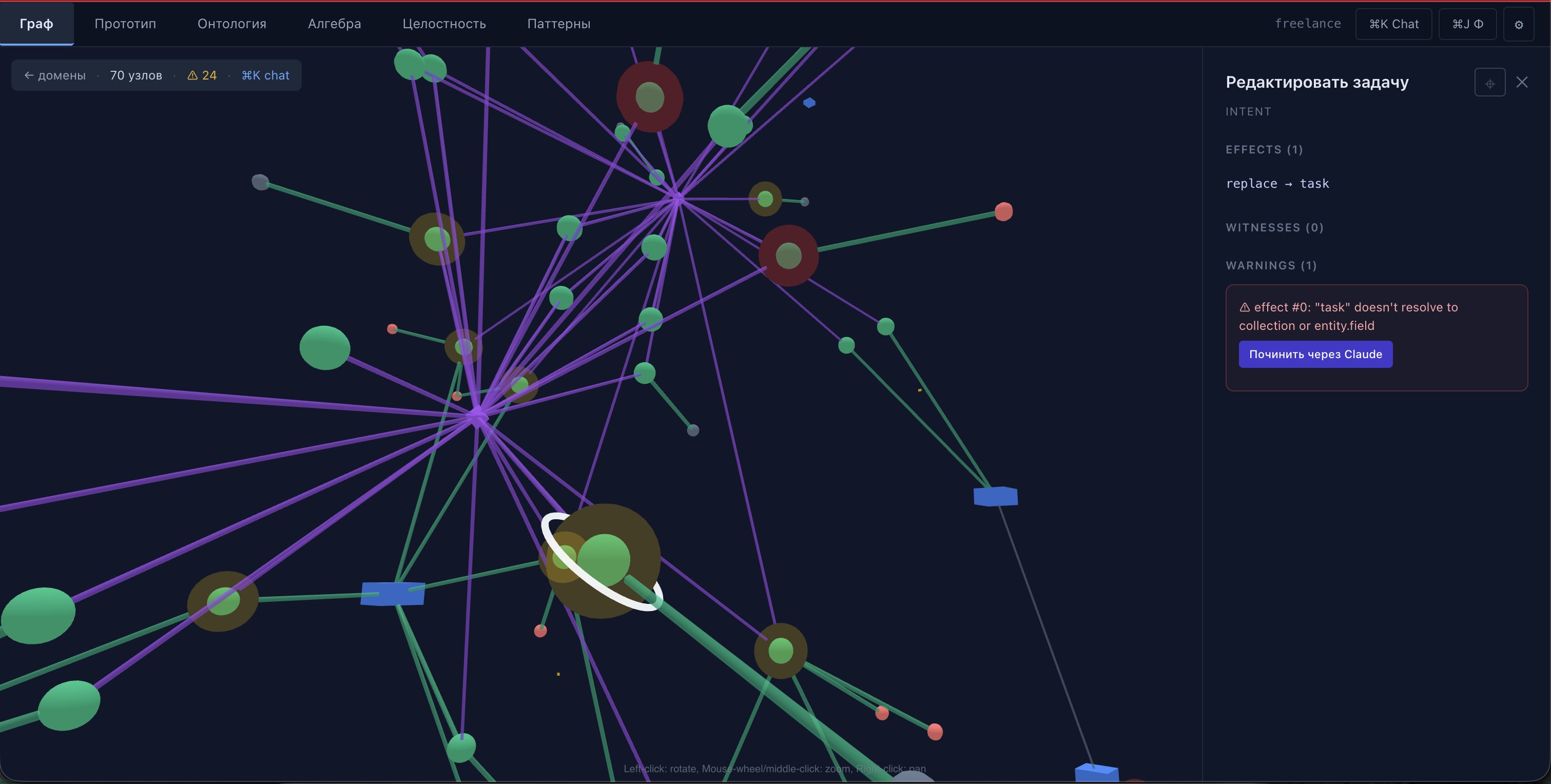

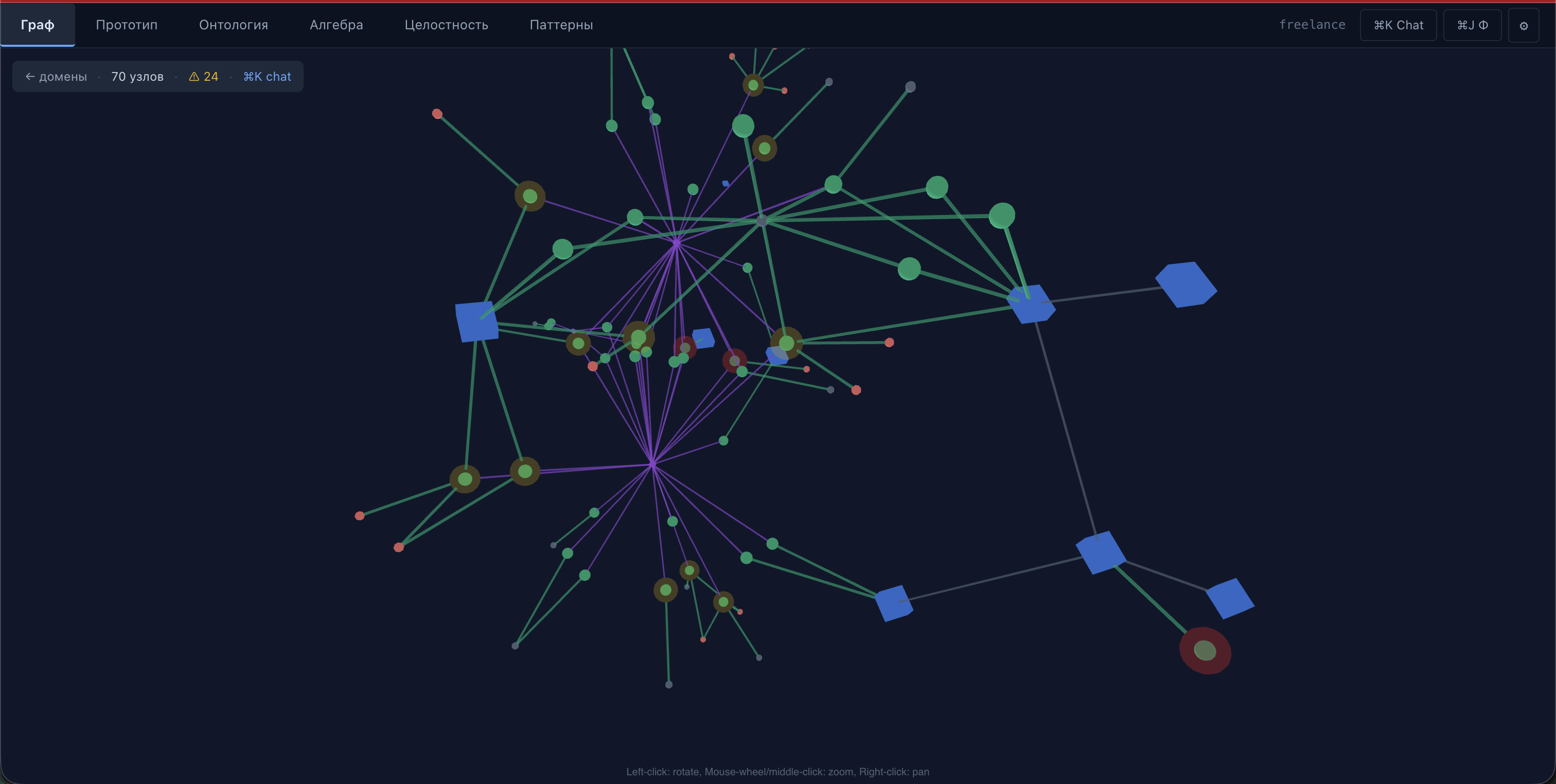

Ontology graph

Entities, roles, ownership, references.

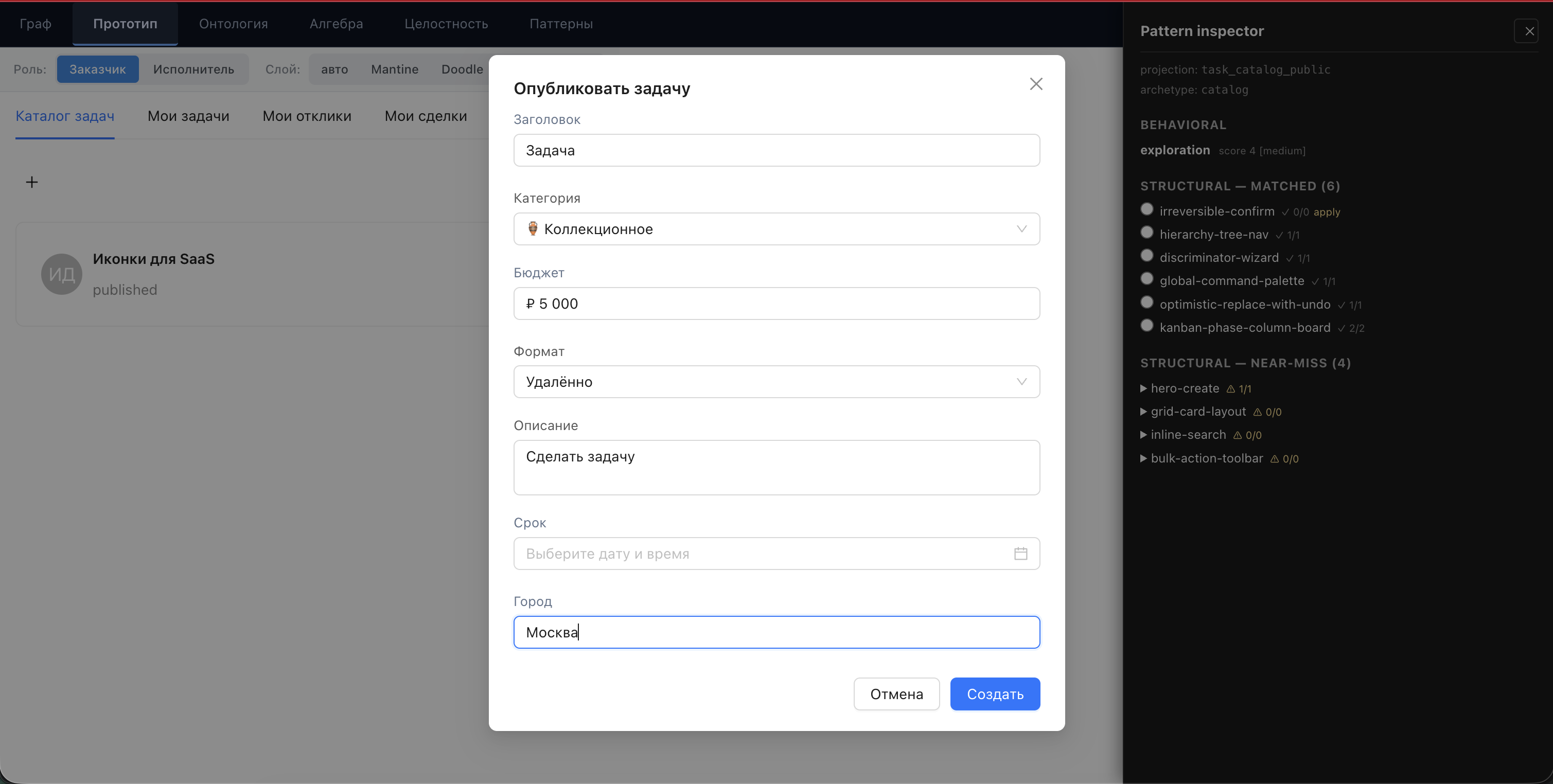

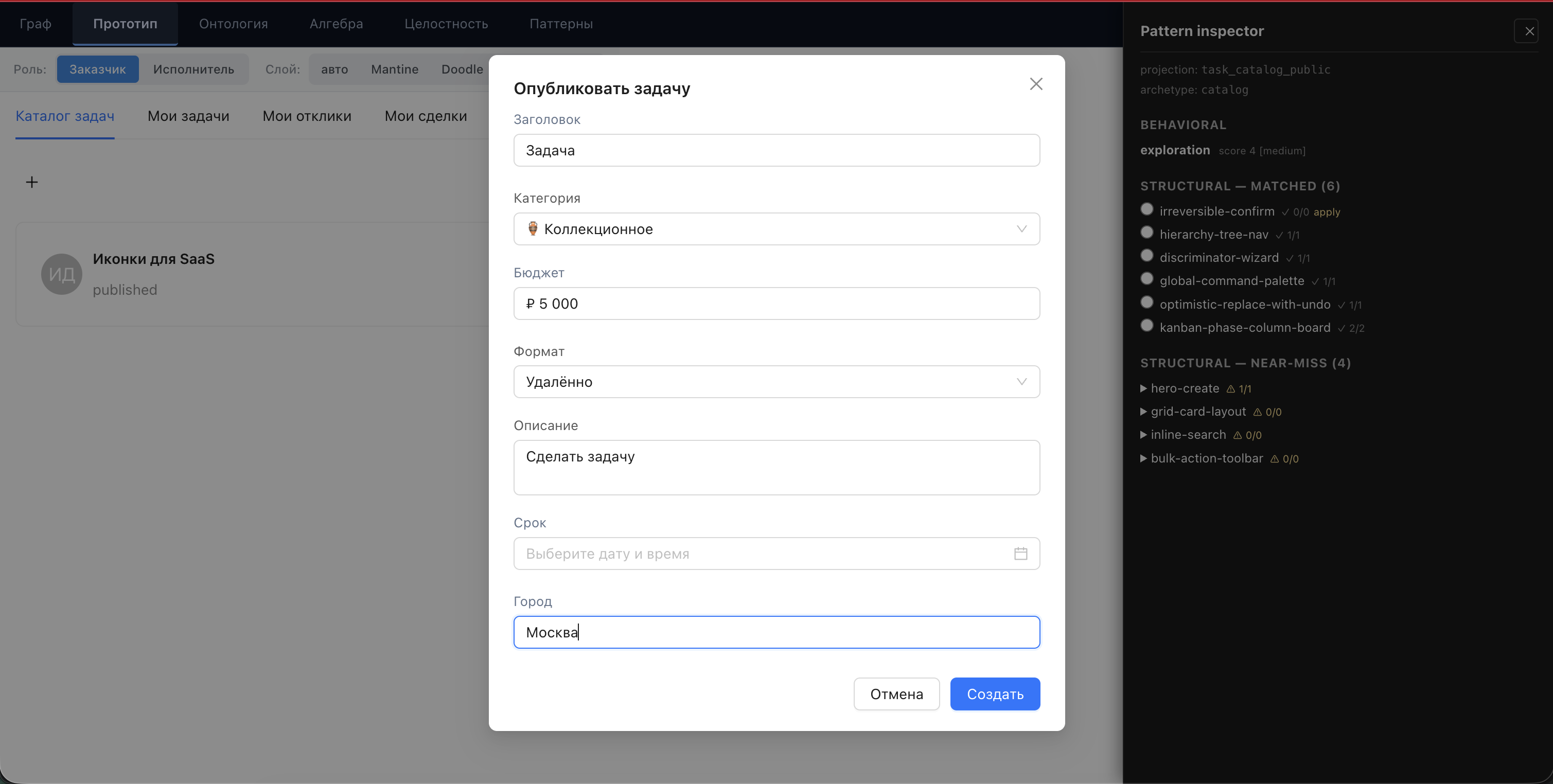

Live prototype

Projections render inline on every change.

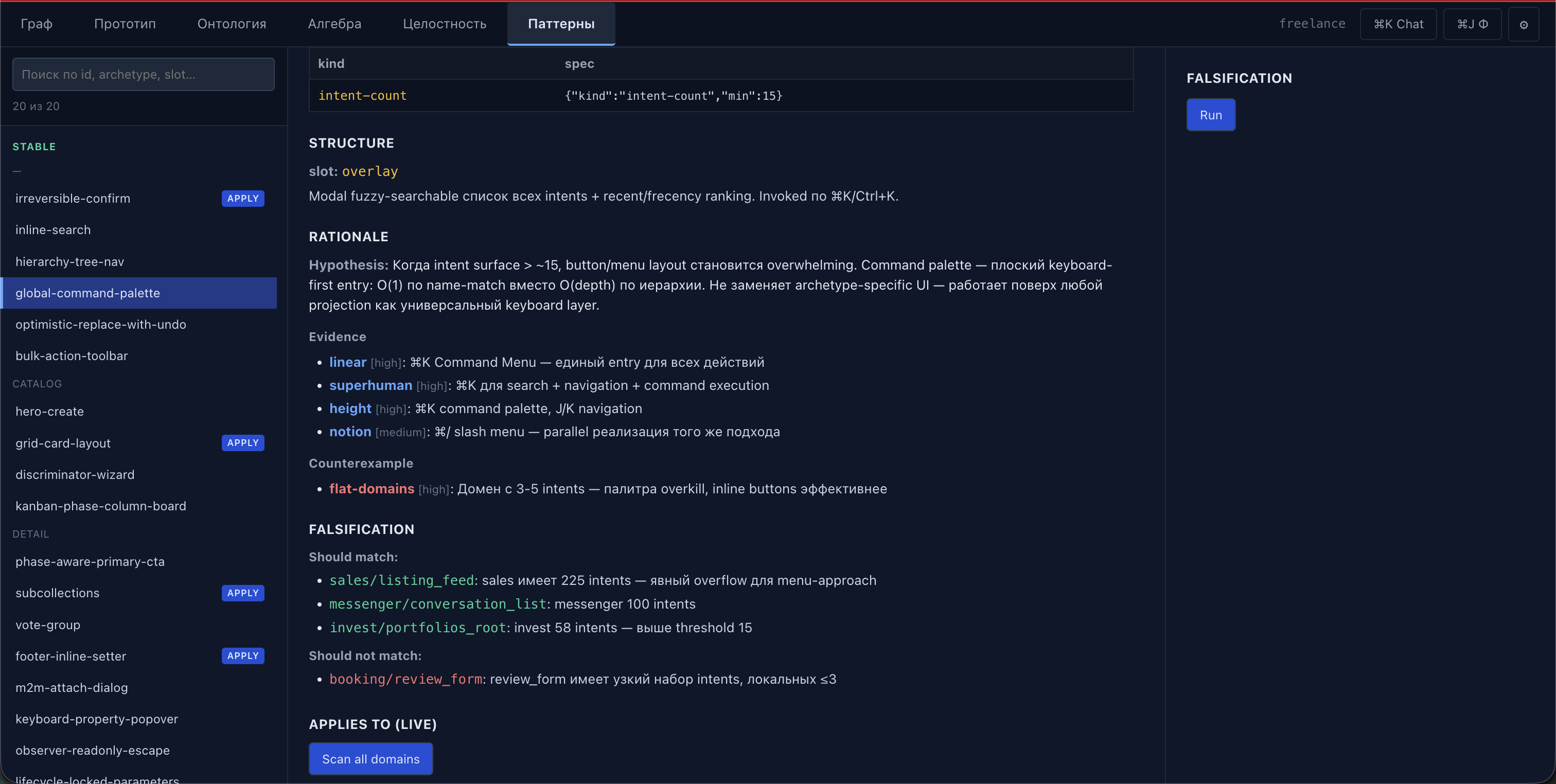

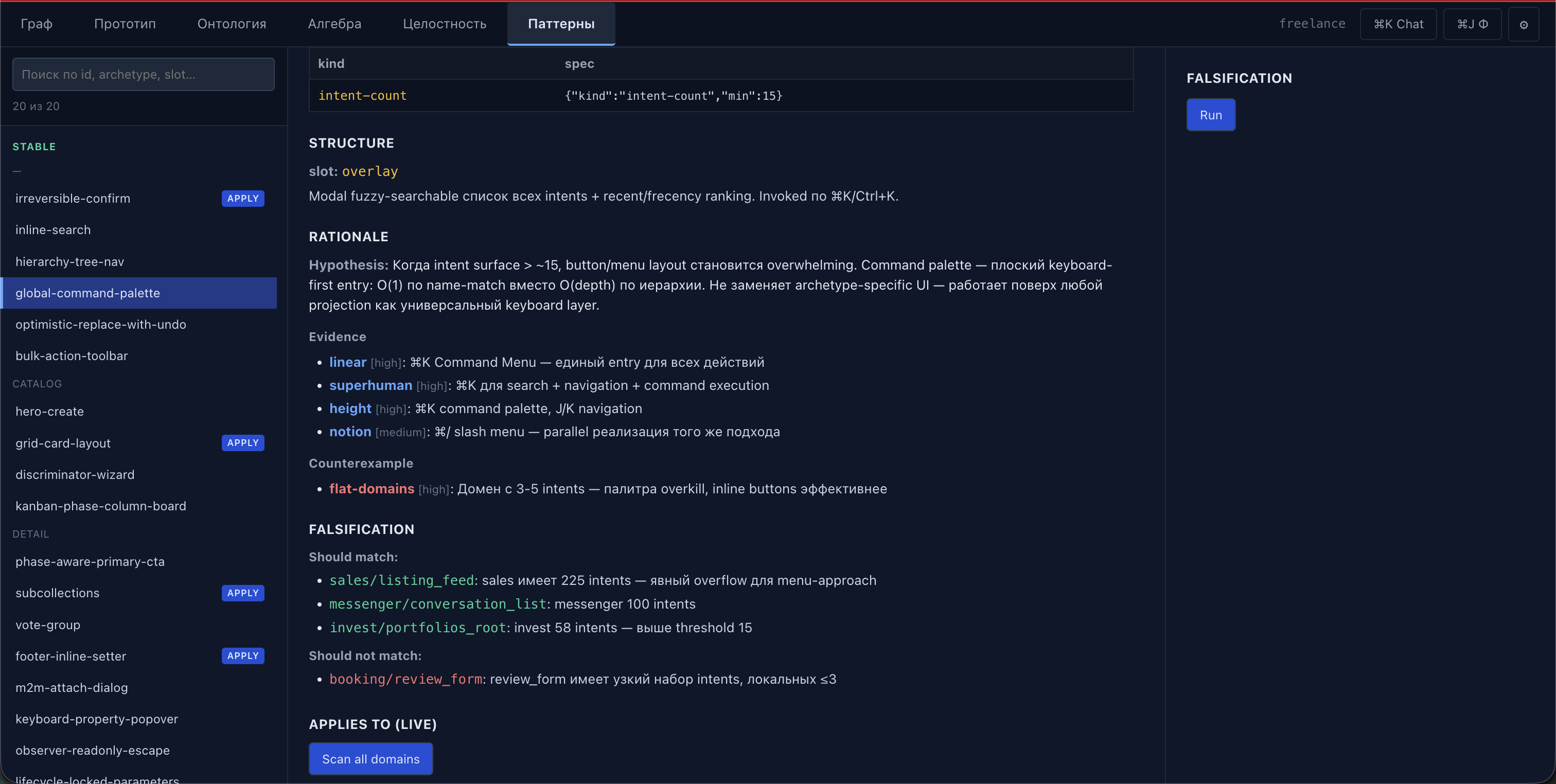

Pattern Inspector

Off / Preview / Commit for stable patterns.

At the OpenAPI / JSON-LD level. One artifact, four readers: pixels, voice, agent API, document. An LLM helps during authoring; at runtime it is not there.

Entities, fields with read/write matrix, roles, invariants, rules.
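As an illustration only, the ontology layer described above could be sketched as plain data plus a lookup over the read/write matrix. Every name here (`Ontology`, `FieldDef`, `readableFields`, the `Order` entity and its roles) is a hypothetical stand-in, not the format's actual schema.

```typescript
// Hypothetical shapes; none of these names are taken from the real format.
type Access = "none" | "read" | "write";

interface FieldDef {
  type: "string" | "number" | "boolean";
  // Read/write matrix: one access level per role; unlisted roles get "none".
  access: Record<string, Access>;
}

interface EntityDef {
  fields: Record<string, FieldDef>;
}

interface Ontology {
  roles: string[];
  entities: Record<string, EntityDef>;
}

const ontology: Ontology = {
  roles: ["customer", "manager"],
  entities: {
    Order: {
      fields: {
        total: { type: "number", access: { customer: "read", manager: "write" } },
        margin: { type: "number", access: { manager: "read" } },
      },
    },
  },
};

// Returns the field names of an entity that a role may at least read.
function readableFields(o: Ontology, entity: string, role: string): string[] {
  const def = o.entities[entity];
  if (!def) return [];
  return Object.entries(def.fields)
    .filter(([, field]) => (field.access[role] ?? "none") !== "none")
    .map(([name]) => name);
}

const customerView = readableFields(ontology, "Order", "customer"); // only "total"
const managerView = readableFields(ontology, "Order", "manager"); // "total" and "margin"
```

Keeping the matrix as data rather than code means the same table can feed every reader without a separate permission layer per channel.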

Declarative particles: ownerRole, requiredFields, conditions, effects.
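A minimal sketch of what an intent built from those four particles might look like, assuming conditions and effects are simple data records. The `Intent` shape, the `checkIntent` helper and the `cancelOrder` example are all illustrative assumptions.

```typescript
// Hypothetical declarative shapes for the four particles.
interface Condition { field: string; equals: unknown } // world[field] must equal this value
interface Effect { set: string; value: unknown }       // world[set] becomes this value

interface Intent {
  name: string;
  ownerRole: string;
  requiredFields: string[];
  conditions: Condition[];
  effects: Effect[];
}

const cancelOrder: Intent = {
  name: "cancelOrder",
  ownerRole: "customer",
  requiredFields: ["reason"],
  conditions: [{ field: "status", equals: "open" }],
  effects: [{ set: "status", value: "cancelled" }],
};

type CheckResult = { ok: true; effects: Effect[] } | { ok: false; error: string };

// Checks role, required input fields and conditions, in that order.
function checkIntent(
  intent: Intent,
  role: string,
  input: Record<string, unknown>,
  world: Record<string, unknown>,
): CheckResult {
  if (role !== intent.ownerRole) return { ok: false, error: "role not allowed" };
  const missing = intent.requiredFields.filter((f) => input[f] === undefined);
  if (missing.length > 0) return { ok: false, error: `missing: ${missing.join(", ")}` };
  const failed = intent.conditions.find((c) => world[c.field] !== c.equals);
  if (failed) return { ok: false, error: `condition failed on ${failed.field}` };
  return { ok: true, effects: intent.effects };
}

const accepted = checkIntent(cancelOrder, "customer", { reason: "changed my mind" }, { status: "open" });
const rejected = checkIntent(cancelOrder, "customer", {}, { status: "open" });
```

Because the intent is data, the same record could in principle be validated, rendered as a form, or exposed as a schema without any hand-written handler.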

Which intents form one screen, one dialog, one page.

Stream of confirmed effects (Φ). The world isn't stored; it is fold(Φ).
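The fold idea can be sketched in a few lines, assuming each confirmed effect sets some fields on one entity. The `ConfirmedEffect` shape and the `fold` helper are assumptions made for illustration; the real effect format may differ.

```typescript
// Hypothetical shapes: the world maps entity ids to their current fields.
type World = Record<string, Record<string, unknown>>;
interface ConfirmedEffect {
  entity: string;
  set: Record<string, unknown>;
}

// Pure step: returns a new world with one effect applied.
function applyEffect(world: World, effect: ConfirmedEffect): World {
  return {
    ...world,
    [effect.entity]: { ...(world[effect.entity] ?? {}), ...effect.set },
  };
}

// The world is derived by folding the effect stream from an empty state.
function fold(phi: ConfirmedEffect[]): World {
  return phi.reduce<World>((world, effect) => applyEffect(world, effect), {});
}

const phi: ConfirmedEffect[] = [
  { entity: "order-1", set: { status: "open", total: 40 } },
  { entity: "order-1", set: { status: "paid" } },
];

const world = fold(phi);
// world["order-1"] now has status "paid" and total 40.
```

Folding a prefix of the same stream gives the world as it was at that point, which is what makes the state reproducible.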

viewerWorld, scoped by role.

Renderer + adapter + Token Bridge. Mantine, shadcn, Apple-glass, AntD.

ProjectionRendererV2

Speech-script of turns. JSON for voice-agents, SSML for TTS, plain for IVR.

/api/voice/:domain/:projection

JSON-schema of intents, viewer-scoped world, exec. Preapproval guard for limits.

/api/agent/:domain/{schema,world,exec}

Structured graph: sections, tables, fields, badges. HTML for print, JSON for archives.

/api/document/:domain/:projection
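The voice reader is described as a speech script of turns delivered as JSON, SSML, or plain text. A rough sketch of that idea follows, assuming a turn is just a line of text to speak. The `Turn` shape and the `renderScript` function are hypothetical and do not describe what the voice endpoint actually returns.

```typescript
// Hypothetical turn shape: one utterance per turn.
interface Turn { say: string }
type Format = "json" | "ssml" | "plain";

// Escapes the characters that would break SSML markup.
function escapeXml(s: string): string {
  return s.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// One renderer per output format, all fed by the same script.
const renderers: Record<Format, (turns: Turn[]) => string> = {
  json: (turns) => JSON.stringify({ turns }),
  ssml: (turns) => `<speak>${turns.map((t) => `<s>${escapeXml(t.say)}</s>`).join("")}</speak>`,
  plain: (turns) => turns.map((t) => t.say).join("\n"),
};

function renderScript(turns: Turn[], format: Format): string {
  return renderers[format](turns);
}

const script: Turn[] = [
  { say: "You have 2 open orders." },
  { say: "Cancel one & keep one?" },
];

const asSsml = renderScript(script, "ssml");
const asPlain = renderScript(script, "plain");
const asJson = renderScript(script, "json");
```

The point of the sketch is that the script is produced once and only the last step differs per channel.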

The artifact is a function of ontology, intents and projection. The UI is a function of the artifact. Everything computable, everything reproducible. No runtime LLM.